NAVSIM: Data-Driven Non-Reactive Autonomous Vehicle Simulation and Benchmarking


Overview

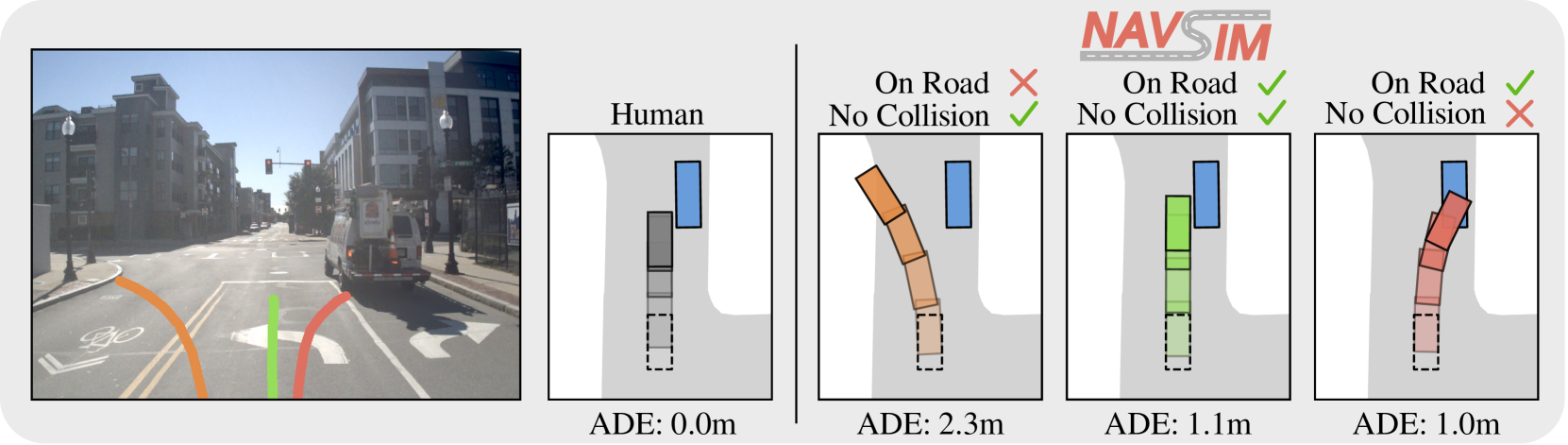

Autonomous vehicle evaluation has long been split between two unsatisfying extremes: open-loop metrics that replay logged trajectories and compare predicted paths against human reference (fast but unreliable), and closed-loop simulation that renders full sensor observations at each step (accurate but computationally prohibitive and plagued by sim-to-real domain gaps). NAVSIM, by Dauner, Li, Yang et al. (University of Tübingen, Shanghai AI Lab, NVIDIA Research, Robert Bosch, University of Toronto, Stanford), introduces a non-reactive simulation paradigm that bridges this gap. The driving policy is queried once at scene initialization to generate a 4-second planned trajectory, and a kinematic bicycle model propagates the vehicle forward at 10 Hz to evaluate consequences in a bird's-eye-view abstraction, without expensive sensor re-rendering.

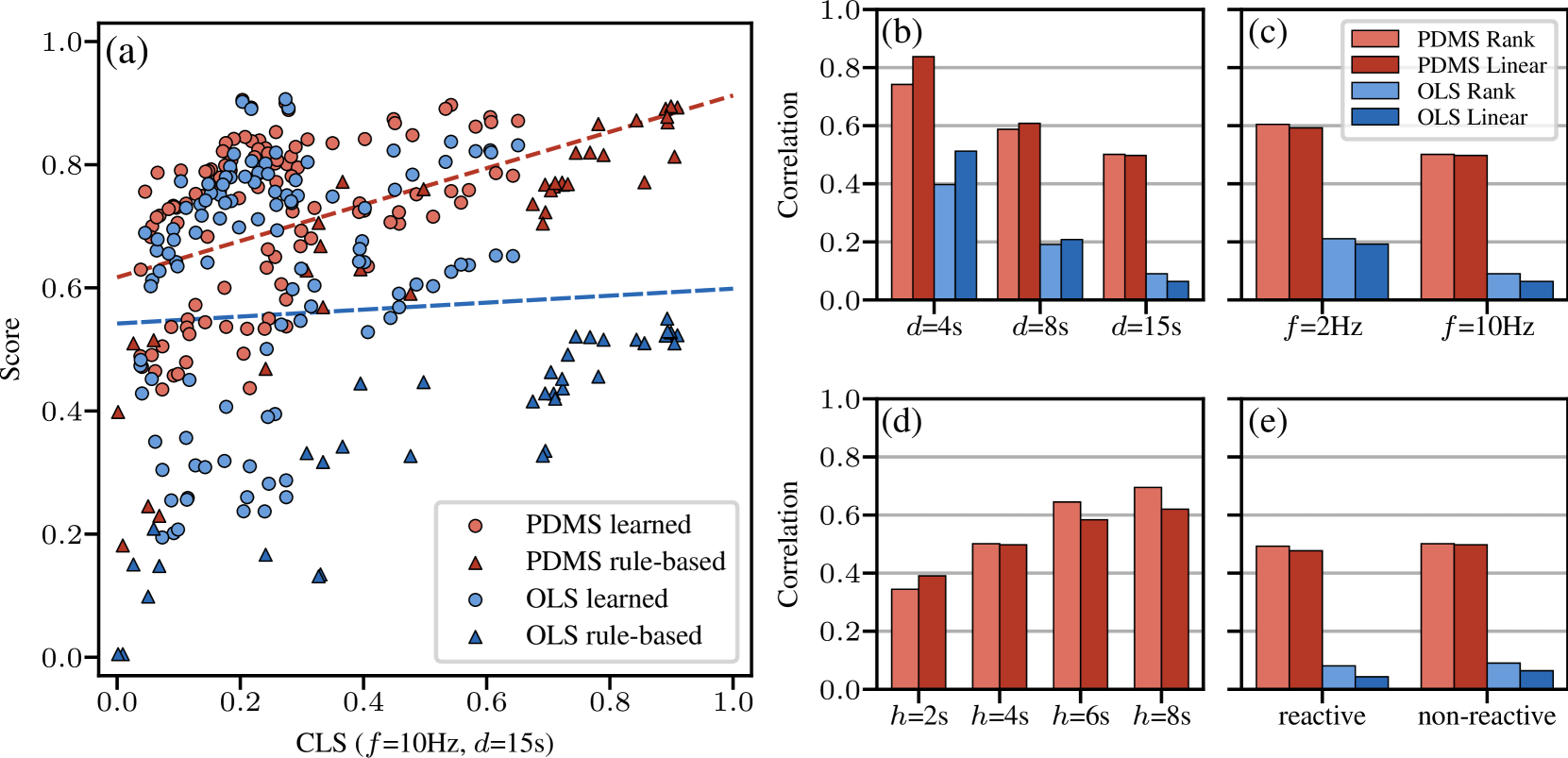

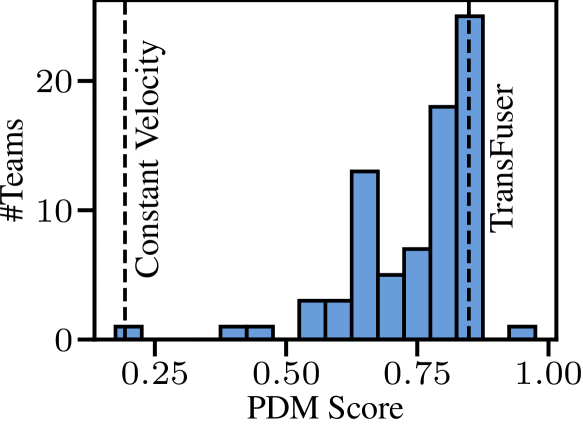

The paper's central empirical finding is that its proposed evaluation metric, PDM Score (PDMS), achieves 0.7--0.8 correlation with closed-loop simulation scores, while traditional open-loop metrics (e.g., Average Displacement Error) achieve only 0.2--0.5 correlation. This validates the non-reactive assumption for short-horizon evaluation and provides a computationally tractable yet meaningful benchmark. NAVSIM also introduces a principled data curation strategy that filters out trivially easy and unsolvable scenarios from nuPlan, yielding a challenging subset where high performance requires genuine perception and planning. The CVPR 2024 NAVSIM Challenge attracted 143 teams and 463 submissions, rapidly establishing the benchmark as a community standard for end-to-end driving evaluation.

A key insight from the benchmark results is that complex multi-module architectures (UniAD at 83.4% PDMS and PARA-Drive at 84.0% PDMS, each trained for ~240 GPU-days) do not outperform the much simpler TransFuser (84.0% PDMS, trained in 1 GPU-day), suggesting potential over-engineering in the field. Even the best models trail human expert performance (94.8% PDMS) by roughly 10 percentage points, highlighting substantial remaining challenges.

Key Contributions

- Non-reactive simulation paradigm: Bridges open-loop and closed-loop evaluation by querying the policy once, then simulating consequences via an LQR controller and kinematic bicycle model in BEV -- no sensor rendering required

- PDM Score (PDMS): A hierarchical evaluation metric combining multiplicative safety penalties (collision, drivable area compliance) with weighted performance metrics (ego progress, time-to-collision, comfort), achieving 0.7--0.8 correlation with closed-loop simulation

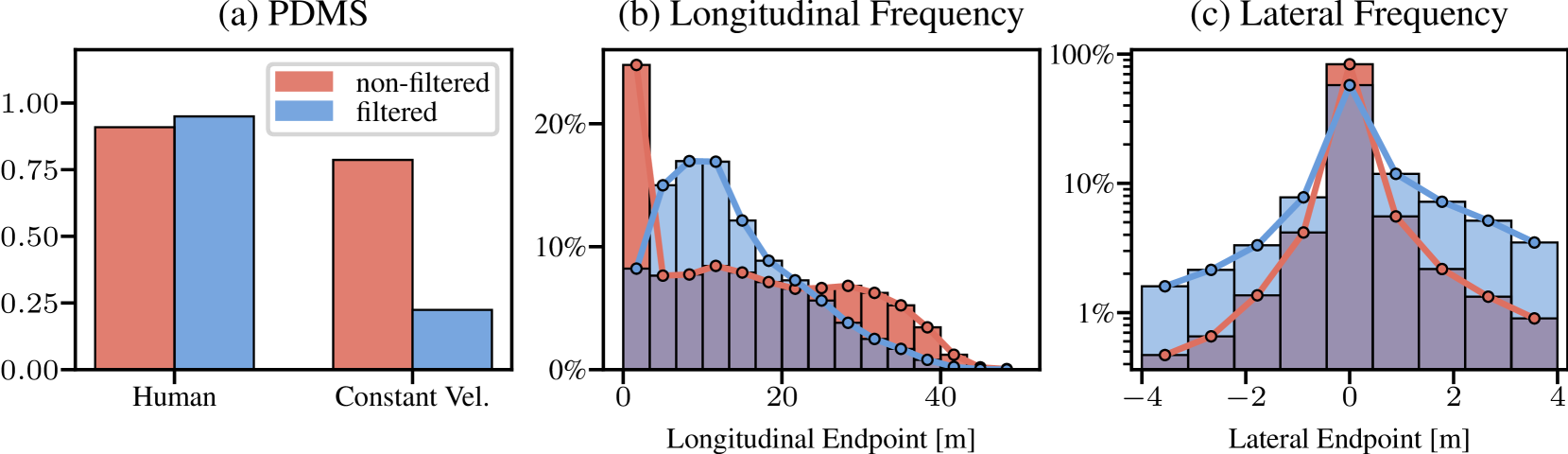

- Principled data curation: Filters nuPlan scenarios to remove trivially easy cases (constant velocity PDMS > 0.8) and annotation errors (human reference PDMS < 0.8), ensuring benchmarked scenarios are challenging yet solvable

- Large-scale benchmarking: Evaluates a suite of driving planners (from ego-status MLPs to UniAD-class multi-module systems) on a standardized benchmark, revealing that architectural complexity does not reliably translate to performance gains

- Community adoption: The CVPR 2024 challenge demonstrated real-world impact, with the winning entry (Hydra-MDP) reviving classical trajectory-sampling approaches

Architecture / Method

┌─────────────────────────────────────────────────────────┐
│  Scene Initialization                                   │
│  Multi-camera images + Ego state + HD Map               │
└────────────────────────────┬────────────────────────────┘
                             │
                             ▼
┌─────────────────────────────────────────────────────────┐
│  Driving Agent (queried once)                           │
│  Outputs: 4-second planned trajectory                   │
└────────────────────────────┬────────────────────────────┘
                             │
                             ▼
┌─────────────────────────────────────────────────────────┐
│  LQR Controller                                         │
│  Converts waypoints ──► steering + acceleration         │
└────────────────────────────┬────────────────────────────┘
                             │
                             ▼
┌─────────────────────────────────────────────────────────┐
│  Kinematic Bicycle Model (10 Hz)                        │
│  Propagates ego vehicle ──► simulated trajectory        │
└────────────────────────────┬────────────────────────────┘
                             │
                             ▼
┌─────────────────────────────────────────────────────────┐
│  PDM Score                                              │
│                                                         │
│  Safety (multiplicative)     Performance (weighted avg) │
│    - No Collision              - Ego Progress           │
│    - Drivable Area             - Time-to-Collision      │
│                                - Comfort                │
│                                                         │
│  PDMS = NC * DAC * w_avg(EP, TTC, C)                    │
└─────────────────────────────────────────────────────────┘

Non-Reactive Simulation Pipeline

NAVSIM operates on the OpenScene dataset (a public redistribution of nuPlan). At evaluation time:

- The driving agent receives sensor observations (multi-camera images + ego state) at scene initialization

- The agent outputs a 4-second planned trajectory

- An LQR controller converts the planned waypoints into steering and acceleration commands

- A kinematic bicycle model propagates the ego vehicle at 10Hz, producing a simulated trajectory

- The simulated trajectory is scored against the recorded scene using the PDM Score
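The propagation step can be sketched in a few lines. This is a minimal illustration, not the NAVSIM implementation: it assumes the planned waypoints are already resampled onto the 10 Hz grid, uses a hypothetical 3 m wheelbase, and swaps the LQR tracker for a simple proportional speed and heading controller (`k_speed`, `k_heading`, `lookahead` and `max_steer` are illustrative parameters). The kinematic bicycle update itself (position advanced along the heading, yaw rate `v / L * tan(steer)`) is the standard model.

```python
import math
from dataclasses import dataclass

WHEELBASE = 3.0  # hypothetical wheelbase [m]
DT = 0.1         # 10 Hz simulation step [s]


@dataclass
class EgoState:
    x: float        # rear-axle position [m]
    y: float        # rear-axle position [m]
    heading: float  # yaw angle [rad]
    speed: float    # longitudinal speed [m/s]


def bicycle_step(s: EgoState, accel: float, steer: float, dt: float = DT) -> EgoState:
    """Advance the kinematic bicycle model by one forward-Euler step."""
    return EgoState(
        x=s.x + s.speed * math.cos(s.heading) * dt,
        y=s.y + s.speed * math.sin(s.heading) * dt,
        heading=s.heading + s.speed / WHEELBASE * math.tan(steer) * dt,
        speed=max(0.0, s.speed + accel * dt),  # no reversing
    )


def simulate(start: EgoState, plan: list[tuple[float, float]],
             k_speed: float = 1.0, k_heading: float = 1.5,
             lookahead: int = 5, max_steer: float = 0.5) -> list[EgoState]:
    """Track `plan` (waypoints on the 10 Hz grid) with a simple P-controller
    standing in for the LQR tracker, and return the simulated ego states."""
    states = [start]
    s = start
    for k in range(len(plan) - 1):
        # Speed reference from the spacing of consecutive planned waypoints.
        (x0, y0), (x1, y1) = plan[k], plan[k + 1]
        v_ref = math.hypot(x1 - x0, y1 - y0) / DT
        accel = k_speed * (v_ref - s.speed)
        # Steer toward a waypoint a few steps ahead (heading error wrapped to [-pi, pi]).
        tx, ty = plan[min(k + lookahead, len(plan) - 1)]
        err = math.atan2(ty - s.y, tx - s.x) - s.heading
        err = math.atan2(math.sin(err), math.cos(err))
        steer = max(-max_steer, min(max_steer, k_heading * err))
        s = bicycle_step(s, accel, steer)
        states.append(s)
    return states


# Usage: a 4 s straight-line plan at 5 m/s, sampled at 10 Hz (41 waypoints).
plan = [(0.5 * k, 0.0) for k in range(41)]
states = simulate(EgoState(0.0, 0.0, 0.0, 5.0), plan)
```

Because the plan is tracked rather than copied, the simulated trajectory can deviate from what the agent asked for (for example, an infeasibly sharp turn is clipped by `max_steer`), and it is the simulated trajectory that gets scored.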

The "non-reactive" assumption means other agents follow their recorded trajectories regardless of the ego vehicle's actions. While this cannot capture multi-agent interaction effects, the paper shows it provides strong correlation with reactive closed-loop evaluation for the 4-second horizon.
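Under the non-reactive assumption, other agents' poses are a pure function of the time step. The sketch below makes this explicit: `logged_position` never looks at the ego, and `find_collisions` checks a simulated ego path against the replayed logs. Circular footprints, the 1.5 m radius, and all names here are hypothetical simplifications for illustration only.

```python
import math

# Logged agent tracks: agent id -> recorded (x, y) per 10 Hz step.
AgentLog = dict[str, list[tuple[float, float]]]


def logged_position(track: list[tuple[float, float]], k: int) -> tuple[float, float]:
    """Non-reactive replay: the pose depends only on the time step k,
    never on the ego trajectory (the last pose is held if the log ends early)."""
    return track[min(k, len(track) - 1)]


def find_collisions(ego_xy: list[tuple[float, float]], agents: AgentLog,
                    radius: float = 1.5) -> list[tuple[int, str]]:
    """Return (step, agent_id) pairs where the ego and a logged agent overlap,
    approximating both footprints as circles of `radius` metres."""
    hits = []
    for k, (ex, ey) in enumerate(ego_xy):
        for agent_id, track in agents.items():
            ax, ay = logged_position(track, k)
            if math.hypot(ex - ax, ey - ay) < 2 * radius:
                hits.append((k, agent_id))
    return hits


# Usage: the ego drives straight at 5 m/s; one logged vehicle is parked ahead.
ego_xy = [(0.5 * k, 0.0) for k in range(41)]
agents = {"parked_car": [(10.0, 0.0)], "far_car": [(0.0, 50.0)] * 41}
hits = find_collisions(ego_xy, agents)
```

The parked car stays put no matter how the ego behaves, so a plan that fails to brake collides with it; equally, a logged vehicle will never yield to an ego that deviates from the human path.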

PDM Score

The PDMS combines sub-metrics hierarchically:

Safety penalties (multiplicative):

- No Collisions (NC): Full penalty for collisions with dynamic agents; partial penalty (sub-score of 0.5) for collisions with static objects

- Drivable Area Compliance (DAC): Full penalty for driving outside designated road areas

Performance metrics (weighted average):

- Ego Progress (EP): Route advancement normalized by a safe upper bound

- Time-to-Collision (TTC): Safe margins to surrounding vehicles

- Comfort (C): Trajectory smoothness evaluated via acceleration and jerk thresholds
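As an illustration of a threshold-based comfort check, the sketch below differentiates a 10 Hz speed profile twice and tests the resulting longitudinal acceleration and jerk against bounds. The bounds are placeholders rather than the official values, and the real metric may check additional quantities (for example lateral acceleration or yaw rate); only the longitudinal case is shown.

```python
DT = 0.1  # 10 Hz sampling [s]


def comfort_score(speeds: list[float], dt: float = DT,
                  min_accel: float = -4.0, max_accel: float = 2.5,
                  max_abs_jerk: float = 4.0) -> float:
    """Return 1.0 if every finite-difference longitudinal acceleration and jerk
    stays within the (placeholder) bounds, else 0.0."""
    accel = [(v1 - v0) / dt for v0, v1 in zip(speeds, speeds[1:])]
    jerk = [(a1 - a0) / dt for a0, a1 in zip(accel, accel[1:])]
    ok = (all(min_accel <= a <= max_accel for a in accel)
          and all(abs(j) <= max_abs_jerk for j in jerk))
    return 1.0 if ok else 0.0


# Usage: cruising at constant speed is comfortable; stopping from 5 m/s in 0.1 s is not.
cruise = comfort_score([5.0] * 41)
slam_brake = comfort_score([5.0, 5.0, 0.0])
```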

The combined formula: PDMS = NC * DAC * weighted_average(EP, TTC, C)
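A minimal sketch of the hierarchical aggregation follows. The NC rule mirrors the description above (0 for a dynamic-agent collision, 0.5 for a static-object collision only). The default weights of 5, 5 and 2 for EP, TTC and C reflect our understanding of the NAVSIM v1 configuration; treat them as an assumption to verify against the official code.

```python
def no_collision_score(collision_kinds: list[str]) -> float:
    """NC sub-score from the kinds of collisions observed ('dynamic' or 'static')."""
    if "dynamic" in collision_kinds:
        return 0.0  # full penalty: collision with a dynamic agent
    if "static" in collision_kinds:
        return 0.5  # partial penalty: collision with a static object only
    return 1.0


def pdm_score(nc: float, dac: float, ep: float, ttc: float, comfort: float,
              weights: tuple[float, float, float] = (5.0, 5.0, 2.0)) -> float:
    """PDMS = NC * DAC * weighted_average(EP, TTC, C).
    The default weights are an assumption; check them against the official code."""
    w_ep, w_ttc, w_c = weights
    performance = (w_ep * ep + w_ttc * ttc + w_c * comfort) / (w_ep + w_ttc + w_c)
    return nc * dac * performance


# Usage: a clean run with half the achievable progress.
score = pdm_score(no_collision_score([]), 1.0, ep=0.5, ttc=1.0, comfort=1.0)
```

The multiplicative gate means no amount of progress or comfort can compensate for leaving the drivable area (DAC = 0), whereas a static-object collision alone halves the score.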

Data Curation

The benchmark filters scenarios from both ends of the difficulty range:

- Remove too-easy scenarios: If a constant-velocity baseline achieves PDMS > 0.8, the scenario does not require intelligent perception or planning

- Remove unsolvable scenarios: If the human reference trajectory scores PDMS < 0.8, the scenario likely has annotation errors or is inherently ambiguous

This produces the navtrain (103,000 samples) and navtest (12,000 samples) splits.
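The two-sided filter reduces to a single predicate per scene. In this sketch the two scoring callables stand in for running the constant-velocity baseline and the human reference through the PDMS pipeline; the function name, scene ids and scores are invented purely to exercise the boundary cases.

```python
from typing import Callable, Hashable, Iterable


def curate(scenes: Iterable[Hashable],
           pdms_constant_velocity: Callable[[Hashable], float],
           pdms_human: Callable[[Hashable], float],
           threshold: float = 0.8) -> list[Hashable]:
    """Keep scenes that are neither too easy nor unsolvable."""
    kept = []
    for scene in scenes:
        too_easy = pdms_constant_velocity(scene) > threshold  # no planning needed
        unsolvable = pdms_human(scene) < threshold            # likely label noise
        if not (too_easy or unsolvable):
            kept.append(scene)
    return kept


# Usage with made-up per-scene scores (illustrative only).
cv = {"a": 0.90, "b": 0.30, "c": 0.50, "d": 0.80}
human = {"a": 0.95, "b": 0.60, "c": 0.90, "d": 0.85}
kept = curate(cv, cv.get, human.get)
```

Scene "a" is dropped as too easy and "b" as unsolvable; "d" survives because the rule as stated is a strict "> 0.8".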

Results

PDMS Correlation with Closed-Loop Simulation

PDMS achieves 0.7--0.8 correlation with closed-loop simulation scores across various planning durations and query frequencies, validating the non-reactive assumption. Traditional open-loop metrics (ADE, FDE) achieve only 0.2--0.5 correlation.
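For contrast, the open-loop metrics reduce to distances from a single logged human trajectory. The sketch below uses the standard ADE/FDE definitions (mean and final Euclidean error over matched timestamps); the example trajectories are made up. Nothing in it looks at collisions or road boundaries, so a safe plan that diverges from the human path is penalized just like an unsafe one, which is one plausible reason for the weaker correlation with closed-loop scores.

```python
import math


def ade_fde(pred: list[tuple[float, float]],
            ref: list[tuple[float, float]]) -> tuple[float, float]:
    """Average and final displacement error between a predicted trajectory and
    the logged human reference, sampled at the same timestamps."""
    if not pred or len(pred) != len(ref):
        raise ValueError("trajectories must be non-empty and equally long")
    dists = [math.dist(p, r) for p, r in zip(pred, ref)]
    return sum(dists) / len(dists), dists[-1]


# Usage: the human drove straight; the planner drifted sideways.
ade, fde = ade_fde([(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)],
                   [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)])
```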

Benchmark Results

| Model | PDMS | Training Compute |
|---|---|---|
| Human Expert | 94.8% | -- |
| TransFuser | 84.0% | 1 GPU-day |
| PARA-Drive | 84.0% | 240 GPU-days |
| Latent TransFuser | 83.8% | -- |
| UniAD | 83.4% | 240 GPU-days |
| Ego Status MLP | 65.6% | -- |
| Constant Velocity | 20.6% | -- |

Key observations:

- Complex architectures (UniAD, PARA-Drive) do not outperform the simpler TransFuser despite roughly 240 times more training compute (~240 GPU-days vs. 1 GPU-day)

- Even the best models trail human experts by roughly 10 percentage points (84.0% vs. 94.8% PDMS)

- The ego-status MLP baseline (65.6%) reveals how much can be achieved without any visual perception, echoing the findings of Is Ego Status All You Need For Open Loop End To End Autonomous Driving

CVPR 2024 Challenge Results

- 143 teams, 463 submissions

- Winner: Hydra-MDP -- extended TransFuser with trajectory sampling and scoring, reviving classical planning approaches

- Runner-up: Vision Language Model (VLM) approach, reflecting growing interest in foundation models for driving

Limitations & Open Questions

- Non-reactive assumption: Cannot capture compounding errors or the ego vehicle's influence on other agents over longer horizons. The 4-second evaluation window limits assessment of long-horizon planning

- Traffic rule coverage: The current metric does not evaluate all traffic rules (e.g., stop signs, traffic lights) or broader driving criteria such as fuel efficiency

- Dataset bias: Built exclusively on nuPlan data, which may not represent all driving conditions and geographies

- Complexity vs. performance paradox: The finding that simple models match complex ones may reflect benchmark limitations rather than genuine architectural parity -- longer horizons or more complex scenarios might differentiate architectures

- Future direction: NAVSIM v2 (Navsim V2 Pseudo Simulation For Autonomous Driving) addresses several of these limitations through pseudo-simulation with 3D Gaussian Splatting, enabling multi-step evaluation with compounding error capture

Connections

Related papers in the wiki:

- Navsim V2 Pseudo Simulation For Autonomous Driving -- direct successor that extends NAVSIM with pseudo-simulation via 3D Gaussian Splatting for multi-step closed-loop-like evaluation

- Is Ego Status All You Need For Open Loop End To End Autonomous Driving -- exposed weaknesses of open-loop nuScenes evaluation, motivating NAVSIM's non-reactive simulation approach

- Carla An Open Urban Driving Simulator -- the primary closed-loop simulation benchmark that NAVSIM aims to complement with cheaper evaluation

- Nuscenes A Multimodal Dataset For Autonomous Driving -- standard open-loop benchmark whose limitations NAVSIM addresses

- Transfuser Imitation With Transformer Based Sensor Fusion For Autonomous Driving -- key baseline that achieves competitive performance on NAVSIM despite simplicity

- Planning Oriented Autonomous Driving -- UniAD, complex multi-module system benchmarked on NAVSIM

- Para Drive Parallelized Architecture For Real Time Autonomous Driving -- parallel E2E architecture benchmarked on NAVSIM

- Diffusiondrive Truncated Diffusion Model For End To End Autonomous Driving -- reports 88.1 PDMS on NAVSIM

- Goalflow Goal Driven Flow Matching For Multimodal Trajectory Generation -- reports 90.3 PDMS on NAVSIM

- Planning -- broader context on planning evaluation challenges