Visual Instruction Tuning (LLaVA)

Overview

Large language models transformed NLP through instruction tuning -- training on diverse instruction-response pairs so models follow human intent across tasks. Visual Instruction Tuning extends this paradigm to the multimodal domain. The paper introduces LLaVA (Large Language and Vision Assistant), which the authors present as the first attempt to extend instruction tuning to the language-image setting: a general-purpose visual and language instruction-following model built by connecting a pretrained CLIP vision encoder to a large language model (Vicuna) through a simple linear projection layer. The paper demonstrates that the instruction-following capabilities of LLMs can be unlocked for visual tasks with surprisingly little architectural complexity, provided the training data captures the right kind of visual reasoning.

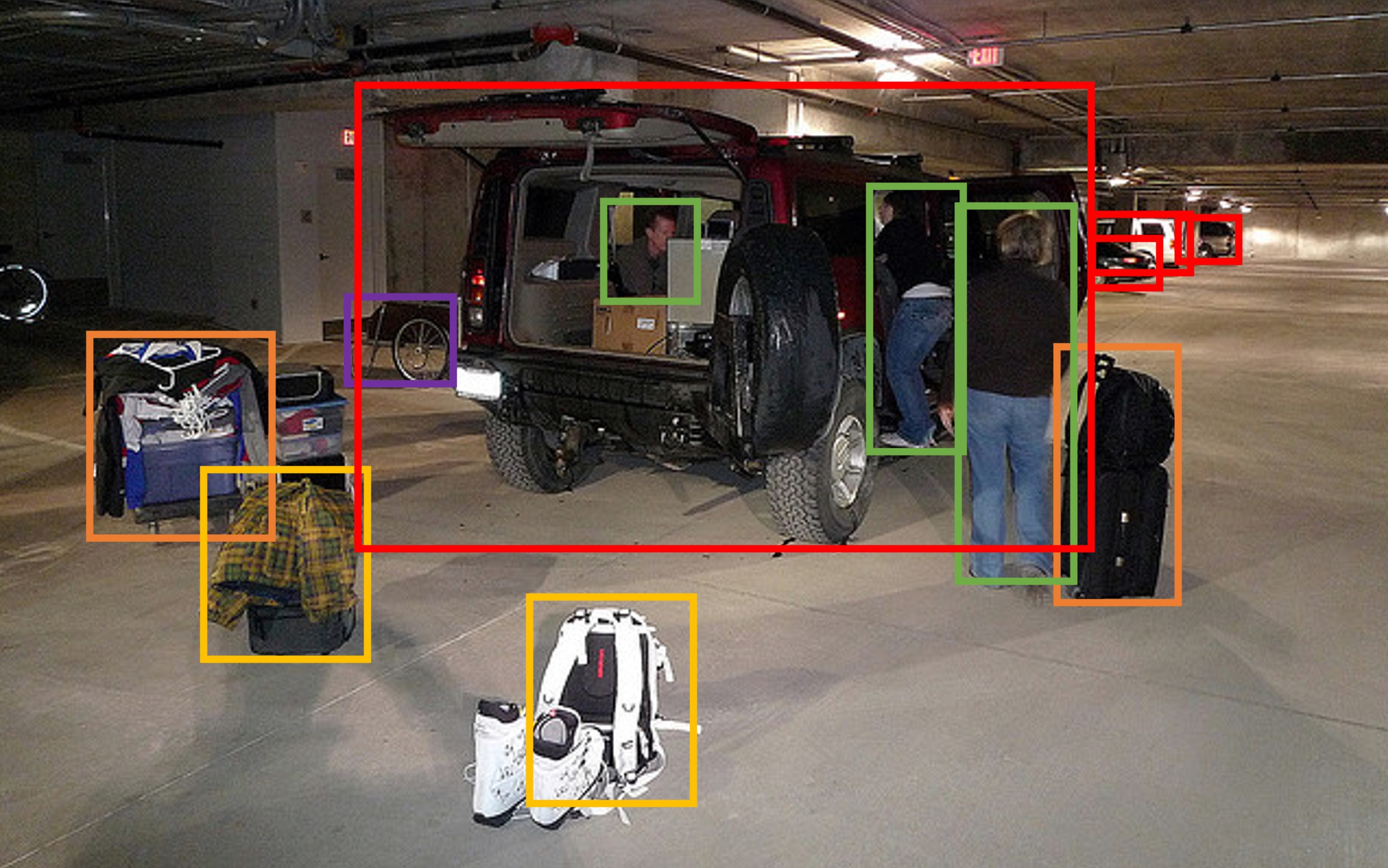

The core insight is a novel GPT-assisted data generation pipeline that creates 158K multimodal instruction-following samples from existing image caption and bounding box annotations (from COCO). Rather than collecting expensive human-annotated instruction-response pairs for images, the authors feed symbolic visual representations (captions and object locations) to GPT-4 as a "teacher" to generate three types of instruction data: multi-turn conversations (58K), detailed image descriptions (23K), and complex visual reasoning chains (77K). This data, despite being generated without GPT-4 actually seeing the images, is sufficient to teach a vision-language model to follow diverse visual instructions.

LLaVA achieves an 85.1% relative score compared with GPT-4 on a synthetic multimodal instruction-following benchmark and, when ensembled with GPT-4 acting as a judge, sets a new state of the art of 92.53% accuracy on ScienceQA. The paper's significance extends far beyond its benchmark numbers: LLaVA established the dominant recipe for open-source multimodal models -- connect a frozen CLIP encoder to an LLM via a lightweight projection, generate instruction-tuning data using a stronger model, and train in two stages. This recipe was adopted and refined by dozens of subsequent models (LLaVA-1.5, LLaVA-NeXT, InternVL, Qwen-VL, and many driving/robotics VLMs).

Key Contributions

- GPT-assisted multimodal instruction data generation: A scalable pipeline that converts existing image annotations (captions + bounding boxes) into 158K diverse instruction-following samples across conversation, detailed description, and complex reasoning categories -- without requiring GPT-4 to see any images

- Simple yet effective architecture: Demonstrates that a linear projection layer connecting a frozen CLIP ViT-L/14 vision encoder to a Vicuna LLM is sufficient for strong multimodal instruction-following, establishing the "visual encoder + projection + LLM" blueprint

- Two-stage training recipe: Stage 1 aligns vision-language features by training only the projection layer on filtered CC3M caption data (595K image-text pairs); Stage 2 fine-tunes the projection layer and the full LLM end-to-end on 158K instruction-following samples, keeping the vision encoder frozen

- LLaVA-Bench evaluation: Introduces a GPT-4-based evaluation protocol for open-ended visual instruction-following, measuring relative quality against GPT-4's own responses

- ScienceQA state-of-the-art: Achieves 92.53% on ScienceQA through a late-fusion ensembling strategy with GPT-4, demonstrating complementary strengths between visual grounding and textual reasoning

Architecture

┌────────────────────────────────────────────────────────────┐
│                     LLaVA Architecture                     │
│                                                            │
│       Image                    User Instruction            │
│         │                              │                   │
│         ▼                              │                   │
│  ┌─────────────┐                       │                   │
│  │CLIP ViT-L/14│ (frozen)              │                   │
│  │  224 x 224  │                       │                   │
│  └──────┬──────┘                       │                   │
│         │ Z_v (patch tokens)           │                   │
│         ▼                              │                   │
│  ┌─────────────┐                       │                   │
│  │ Linear proj.│ (trainable)           │                   │
│  │ H_v = W·Z_v │                       │                   │
│  └──────┬──────┘                       │                   │
│         │ Visual tokens                │ Text tokens       │
│         │ (LLM dim)                    │                   │
│         └──────────┬───────────────────┘                   │
│                    │ Interleaved sequence:                 │
│                    │ [sys] [H_v] [instruction] [response]  │
│                    ▼                                       │
│   ┌─────────────────────────────────┐                      │
│   │    Vicuna (LLaMA fine-tuned)    │                      │
│   │    Autoregressive generation    │                      │
│   │  Loss on response tokens only   │                      │
│   └────────────────┬────────────────┘                      │
│                    ▼                                       │
│           Generated Response                               │
│                                                            │
│  Training:                                                 │
│    Stage 1: Freeze ViT + LLM, train W only (595K CC3M)     │
│    Stage 2: Freeze ViT, fine-tune LLM + W (158K instruct)  │
└────────────────────────────────────────────────────────────┘

Architecture / Method

Model Architecture

LLaVA's architecture is intentionally minimalist, consisting of three components:

- Vision Encoder: A pretrained CLIP ViT-L/14 operating at 224x224 resolution. The encoder produces a grid of visual patch tokens Z_v from the input image. The vision encoder remains frozen throughout training.

- Linear Projection Layer: A single trainable linear layer W that maps CLIP visual features into the word embedding space of the language model: H_v = W · Z_v, where H_v are visual tokens with the same dimensionality as the LLM's text embeddings.

- Language Model: Vicuna (a fine-tuned LLaMA), which processes the interleaved sequence of projected visual tokens and text tokens autoregressively. The LLM is frozen in Stage 1 and fine-tuned in Stage 2.
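The projection step can be made concrete with a small sketch. It assumes CLIP ViT-L/14 grid features of width 1024 and a 5120-dimensional LLM embedding (the hidden size of Vicuna-13B); the helper names are hypothetical, and plain Python lists stand in for tensors. A 224x224 input cut into 14x14 patches yields a 16x16 grid, so each image contributes 256 visual tokens to the LLM's context.

```python
# Sketch of LLaVA's visual-token path. Assumed sizes (not taken from the text above):
# CLIP ViT-L/14 grid features of width 1024, LLM embedding width 5120 (Vicuna-13B).
# Plain lists stand in for tensors so the example runs without dependencies.


def num_patch_tokens(image_size: int, patch_size: int) -> int:
    """Patch tokens produced by a ViT on a square image (no [CLS] token)."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    side = image_size // patch_size
    return side * side


def project(W: list[list[float]], Z_v: list[list[float]]) -> list[list[float]]:
    """H_v = W · Z_v applied to every patch feature.

    W has shape (d_llm, d_vis); each row of Z_v is one patch feature of length d_vis.
    Returns one d_llm-dimensional visual token per patch.
    """
    return [[sum(w * z for w, z in zip(row, feat)) for row in W] for feat in Z_v]


def projection_weight_count(d_vis: int, d_llm: int) -> int:
    """Number of entries in W (no bias term, matching H_v = W · Z_v)."""
    return d_vis * d_llm


print(num_patch_tokens(224, 14))            # 256 visual tokens per image
print(projection_weight_count(1024, 5120))  # 5242880 trainable weights in W
print(project([[1, 0, 0], [0, 1, 1]], [[1, 2, 3], [4, 5, 6]]))  # [[1, 5], [4, 11]]
```

The point of the arithmetic is scale: under these assumptions W holds about 5.2M weights, a tiny fraction of a 13B-parameter LLM, which is why training it alone in Stage 1 is cheap.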

The input to the LLM is a multimodal sequence: [system prompt] [visual tokens H_v] [user instruction] [assistant response]. The model is trained with standard autoregressive next-token prediction, with loss computed only on the assistant's response tokens.
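A minimal sketch of the sequence assembly and the response-only loss follows. The token ids are made up, and `IMAGE_SLOT` is a hypothetical placeholder id marking where the projected visual tokens H_v would be spliced in. Non-response positions receive the label -100, the value PyTorch's `CrossEntropyLoss` ignores by default, so only assistant tokens contribute to the loss.

```python
# Sketch of "loss on response tokens only" with made-up token ids.
IGNORE_INDEX = -100  # PyTorch's CrossEntropyLoss skips this label by default
IMAGE_SLOT = -1      # hypothetical placeholder; real pipelines splice in H_v embeddings here


def build_example(segments):
    """Concatenate (role, token_ids) segments into (input_ids, labels).

    Only "assistant" segments keep their ids as labels; system, image, and user
    positions get IGNORE_INDEX so they contribute nothing to the loss.
    """
    input_ids, labels = [], []
    for role, ids in segments:
        input_ids.extend(ids)
        labels.extend(ids if role == "assistant" else [IGNORE_INDEX] * len(ids))
    return input_ids, labels


def masked_nll(target_logprobs, labels):
    """Mean negative log-likelihood over supervised positions.

    target_logprobs[i] is the model's log-probability of labels[i] at position i,
    assumed to be already aligned for next-token prediction.
    """
    kept = [-lp for lp, y in zip(target_logprobs, labels) if y != IGNORE_INDEX]
    return sum(kept) / len(kept)


segments = [
    ("system", [1, 2]),
    ("image", [IMAGE_SLOT] * 3),  # 256 slots for a 224x224 ViT-L/14 image; 3 here
    ("user", [10, 11]),
    ("assistant", [20, 21, 22]),
]
input_ids, labels = build_example(segments)
print(labels)  # [-100, -100, -100, -100, -100, -100, -100, 20, 21, 22]
print(masked_nll([-0.5] * 7 + [-1.0, -2.0, -3.0], labels))  # 2.0
```

Under this scheme a multi-turn conversation sample would simply contain several assistant segments, each of which is supervised.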

Data Generation Pipeline

The instruction data generation uses GPT-4 (text-only) as a teacher:

- Input to GPT-4: Image captions and bounding box coordinates (symbolic representations, not actual images)

- Prompt engineering: Seed examples for each data type guide GPT-4 to produce diverse, contextual responses

- Three data types:

  - Conversation (58K): Multi-turn Q&A about image content, requiring spatial understanding

  - Detailed Description (23K): Rich paragraph-length descriptions of image content

  - Complex Reasoning (77K): Multi-step reasoning chains about image content (e.g., inferring cause-effect, counting, spatial relationships)
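The symbolic input to the text-only teacher can be sketched as follows. COCO stores boxes as `[x, y, width, height]` in pixels; the normalized `[x1, y1, x2, y2]` rendering, the chat-message schema, and the system prompt text are assumptions for illustration, and no model API is called.

```python
# Sketch of turning COCO-style annotations into the text-only context a teacher
# LLM sees. Box rendering, message schema, and prompt text are assumptions.


def normalize_coco_box(bbox, img_w, img_h):
    """COCO boxes are [x, y, width, height] in pixels; return normalized (x1, y1, x2, y2)."""
    x, y, w, h = bbox
    return (x / img_w, y / img_h, (x + w) / img_w, (y + h) / img_h)


def format_symbolic_context(captions, boxes):
    """Captions first, then one 'category: [x1, y1, x2, y2]' line per object."""
    lines = list(captions)
    for name, (x1, y1, x2, y2) in boxes:
        lines.append(f"{name}: [{x1:.3f}, {y1:.3f}, {x2:.3f}, {y2:.3f}]")
    return "\n".join(lines)


def build_messages(system_prompt, seed_examples, captions, boxes):
    """System prompt, hand-written (context, response) seed pairs as few-shot
    turns, then the new image's symbolic context as the final user turn."""
    messages = [{"role": "system", "content": system_prompt}]
    for context, response in seed_examples:
        messages.append({"role": "user", "content": context})
        messages.append({"role": "assistant", "content": response})
    messages.append({"role": "user", "content": format_symbolic_context(captions, boxes)})
    return messages


box = normalize_coco_box([64, 48, 128, 96], img_w=640, img_h=480)
msgs = build_messages(
    "You are an AI visual assistant. Generate a conversation about the image.",  # hypothetical
    [("A cat on a sofa.\ncat: [0.200, 0.300, 0.600, 0.900]", "Q: What animal is shown? A: A cat.")],
    ["A person riding a bicycle down a city street."],
    [("person", box)],
)
print(msgs[-1]["content"])
# A person riding a bicycle down a city street.
# person: [0.100, 0.100, 0.300, 0.300]
```

Swapping the seed examples (and the instruction in the system prompt) is what would steer the same annotations toward conversation, detailed-description, or complex-reasoning samples.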

Two-Stage Training

- Stage 1 -- Feature Alignment (4 hours on 8x A100): Only the projection matrix W is trained on 595K filtered CC3M image-caption pairs. Both the vision encoder and LLM are frozen. This aligns the visual feature space with the language embedding space.

- Stage 2 -- End-to-End Fine-Tuning (10 hours on 8x A100): The LLM weights are unfrozen and fine-tuned jointly with the projection layer on the 158K GPT-generated instruction-following dataset. The vision encoder remains frozen.
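The freezing schedule of the two stages can be summarized as a small configuration sketch. The module names and parameter counts are rough, hypothetical figures chosen only to show the difference in scale between the stages.

```python
# Sketch of which modules receive gradients in each stage. Parameter counts are
# rough illustrative assumptions (ViT-L ~0.3B, Vicuna-13B ~13B, W = 1024 x 5120).

STAGES = {
    "stage1_feature_alignment": {"vision_encoder": False, "projection": True, "llm": False},
    "stage2_end_to_end": {"vision_encoder": False, "projection": True, "llm": True},
}


def trainable_modules(stage):
    """Names of the modules that are updated in the given stage."""
    return [module for module, trainable in STAGES[stage].items() if trainable]


def trainable_param_count(stage, param_counts):
    """Total parameters updated in the given stage."""
    flags = STAGES[stage]
    return sum(n for module, n in param_counts.items() if flags[module])


param_counts = {"vision_encoder": 300_000_000, "projection": 1024 * 5120, "llm": 13_000_000_000}
print(trainable_modules("stage1_feature_alignment"))  # ['projection']
print(trainable_modules("stage2_end_to_end"))         # ['projection', 'llm']
print(trainable_param_count("stage1_feature_alignment", param_counts))  # 5242880
```

In a PyTorch implementation, these flags would typically translate into `module.requires_grad_(False)` calls on the frozen modules before the optimizer is built.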

Results

LLaVA-Bench Evaluation

LLaVA is evaluated on two custom benchmarks using GPT-4 as a judge, scoring model responses relative to GPT-4's own reference answers:

| Model | LLaVA-Bench (COCO), relative score | LLaVA-Bench (In-the-Wild), relative score |
|---|---|---|
| LLaVA | 85.1% | 67.3% |
| BLIP-2 | 65.0% | -- |
| OpenFlamingo | 37.1% | -- |

Instruction tuning raised LLaVA's relative score by over 50 points compared with the same model trained without instruction tuning. On in-the-wild images, LLaVA outperformed OpenFlamingo by 48 points and BLIP-2 by 29 points of relative score. On the complex reasoning questions of the In-the-Wild benchmark, LLaVA reaches an 81.7% relative score.
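The relative score can be sketched as a ratio of judge ratings. The assumption here is that the judge gives each answer an overall score on a 1 to 10 scale and that the candidate's summed score is divided by the summed score of the GPT-4 reference answers; the helper is hypothetical.

```python
# Sketch of a judge-based relative score. The aggregation (ratio of summed
# 1-10 ratings) is an assumption for illustration.


def relative_score(candidate_scores, reference_scores):
    """Candidate's total judge score as a percentage of the reference's total."""
    if len(candidate_scores) != len(reference_scores) or not reference_scores:
        raise ValueError("need one reference score per candidate score")
    return 100.0 * sum(candidate_scores) / sum(reference_scores)


print(relative_score([8, 6, 7], [9, 8, 8]))  # 84.0
```

Because the score is normalized by the reference, differences between models (such as the 48- and 29-point gaps above) are differences in percentage points of this ratio.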

ScienceQA

| Method | Accuracy |
|---|---|
| LLaVA + GPT-4 (ensemble) | 92.53% |
| GPT-4 (text-only CoT) | 82.69% |
| LLaVA alone | 90.92% |
| Multimodal-CoT (prev. SOTA) | 91.68% |

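In the ensemble, both LLaVA and text-only GPT-4 answer each question. When their answers agree, that answer is kept; when they disagree, GPT-4 is prompted again with the question and both candidate answers and asked to give a final answer. This "GPT-4 as judge" scheme combines LLaVA's visual grounding with GPT-4's textual reasoning.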
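The decision rule of the ensemble is short enough to write out. In this sketch `ask_judge` is a hypothetical callable standing in for the second GPT-4 prompt, replaced here by a stub that always sides with LLaVA; the questions, answers, and gold labels are made up.

```python
# Sketch of the agreement-then-judge ensemble with a stub judge.


def ensemble_answer(question, llava_answer, gpt4_answer, ask_judge):
    """Keep the shared answer when both models agree; otherwise let the judge
    decide, given the question and both candidate answers."""
    if llava_answer == gpt4_answer:
        return llava_answer
    return ask_judge(question, llava_answer, gpt4_answer)


def accuracy(predictions, gold):
    """Percentage of predictions that match the gold answers."""
    return 100.0 * sum(p == g for p, g in zip(predictions, gold)) / len(gold)


def prefer_llava(question, llava_answer, gpt4_answer):
    """Stub judge for illustration only; a real judge reasons over both answers."""
    return llava_answer


questions = ["q1", "q2", "q3"]
llava = ["A", "B", "C"]
gpt4 = ["A", "D", "C"]
gold = ["A", "B", "D"]
preds = [ensemble_answer(q, la, ga, prefer_llava) for q, la, ga in zip(questions, llava, gpt4)]
print(preds)                            # ['A', 'B', 'C']
print(round(accuracy(preds, gold), 1))  # 66.7
```

Only the disagreement cases (here, "q2") ever reach the judge, so the extra GPT-4 calls are limited to the questions where the two models actually differ.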

Key Ablation Findings

- Data type matters: Complex reasoning data contributes more to performance than conversation or description data alone

- Projection layer: Even a simple linear projection is sufficient; the quality of instruction data matters more than projection complexity

- Scale of instruction data: Performance improves with more instruction-following samples, with diminishing returns beyond ~150K

Limitations & Open Questions

- Resolution bottleneck: CLIP ViT-L/14 operates at 224x224, severely limiting fine-grained visual understanding (reading text, counting small objects, spatial precision) -- subsequent work (LLaVA-1.5, LLaVA-NeXT) addressed this with higher resolution and dynamic tiling

- Hallucination: LLaVA can generate plausible-sounding but visually incorrect descriptions, a known issue with autoregressive VLMs that lack explicit visual grounding mechanisms

- Single-image limitation: No support for video or multi-image reasoning in the original formulation

- Evaluation limitations: GPT-4-based evaluation, while useful, is noisy and biased toward verbose, well-structured responses -- not necessarily accurate ones

- Data generation without visual grounding: GPT-4 generates instruction data from captions and bounding boxes, not actual images, which can introduce systematic biases in the training data

Connections

Related papers in the wiki:

- Learning Transferable Visual Models From Natural Language Supervision -- LLaVA uses CLIP ViT-L/14 as its vision encoder; CLIP's contrastive pretraining provides the visual representations that LLaVA projects into language space

- Attention Is All You Need -- The transformer architecture underlying both the CLIP encoder and the Vicuna LLM

- Language Models Are Few Shot Learners -- GPT-3 demonstrated few-shot in-context learning at scale, part of the foundation for the instruction-following LLMs whose capabilities LLaVA extends to the visual domain

- Chain Of Thought Prompting Elicits Reasoning In Large Language Models -- LLaVA's complex reasoning data category explicitly trains multi-step visual reasoning, extending CoT to multimodal settings

- Bert Pre Training Of Deep Bidirectional Transformers For Language Understanding -- BERT's pretrain-then-adapt paradigm informs LLaVA's two-stage approach

- Palm E An Embodied Multimodal Language Model -- PaLM-E (2023) independently explored injecting visual tokens into LLMs for embodied tasks; LLaVA demonstrated a simpler, open-source recipe achieving broad visual instruction-following

- An Image Is Worth 16X16 Words Transformers For Image Recognition At Scale -- ViT established that pure transformers work for vision; the CLIP image encoder used in LLaVA is a ViT trained with a contrastive image-text objective rather than supervised classification

- Rt 2 Vision Language Action Models Transfer Web Knowledge To Robotic Control -- RT-2 applies the VLM-to-action paradigm that LLaVA helped popularize, extending it from visual chat to robotic control

- Foundation Models -- LLaVA exemplifies the "connect pretrained modules with minimal glue" philosophy of foundation model adaptation

- Vision Language Action -- LLaVA's architecture (vision encoder + projection + LLM) became the standard backbone for VLA models in both robotics and autonomous driving