Denoising Diffusion Probabilistic Models

Citation

Ho, Jain, and Abbeel, NeurIPS, 2020.

Canonical link

Overview

Denoising Diffusion Probabilistic Models (DDPM) demonstrates that high-quality images can be generated by training a neural network to iteratively reverse a fixed Gaussian noise-addition process, yielding a simple MSE training objective that matches or beats GANs on image quality without adversarial training instabilities.

DDPM revived and popularized diffusion-based generative models, establishing the paradigm that now powers DALL-E, Stable Diffusion, and virtually all state-of-the-art image generators. The key insight is that the variational lower bound for the reverse diffusion process simplifies to predicting the noise epsilon added at each timestep, yielding a trivially simple training loop: sample image, sample timestep, add noise, predict noise, compute MSE loss. This elegance -- combined with GAN-competitive FID scores (3.17 on CIFAR-10) and completely stable training -- made diffusion the default generative modeling approach.

The paper's impact extends far beyond image generation. The diffusion framework has been adapted to video generation, 3D synthesis, audio, molecular design, and even trajectory planning in robotics and autonomous driving, making DDPM one of the most influential generative modeling papers of the decade.

Key Contributions

- Epsilon-prediction parameterization: Instead of predicting the denoised mean directly, the network predicts the noise epsilon that was added, which the paper shows is equivalent to optimizing the variational lower bound but empirically yields better sample quality

- Fixed forward process with linear noise schedule: q(x_t | x_{t-1}) adds Gaussian noise with variance beta_t linearly increasing from 0.0001 to 0.02 over T=1000 steps, enabling closed-form sampling of any x_t from x_0 via x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * epsilon

- Connection between diffusion and score matching: The paper establishes that DDPM training is equivalent to denoising score matching, unifying the diffusion and score-based generative model perspectives

- U-Net architecture with timestep conditioning: Uses a U-Net with sinusoidal timestep embeddings, self-attention at 16x16 resolution, and group normalization, setting the standard backbone for diffusion models

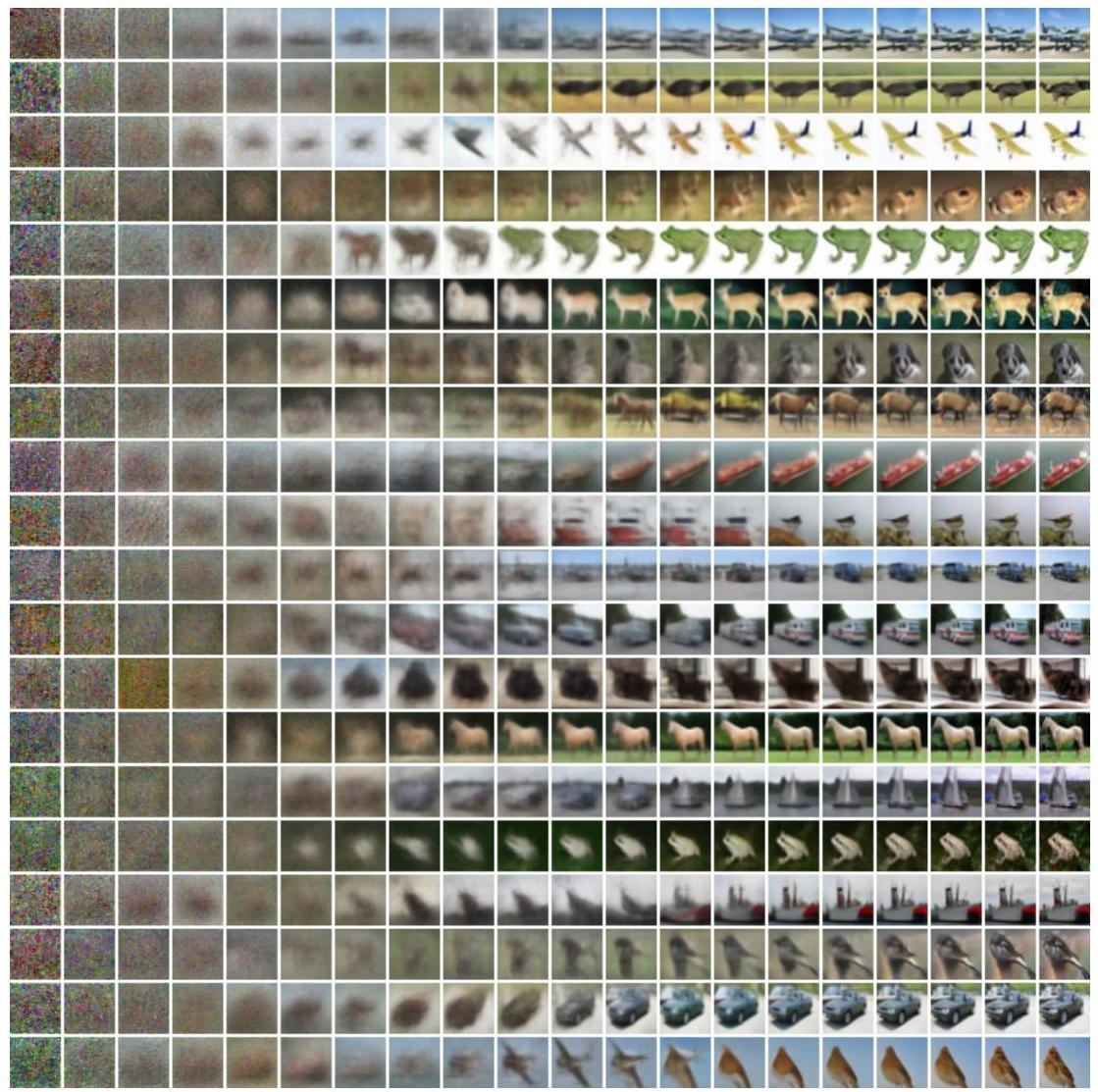

- Progressive generation from pure noise: Sampling iterates x_T -> x_{T-1} -> ... -> x_0, where each step applies the learned denoiser plus a noise injection, producing images that gradually sharpen from static

Architecture / Method

Forward Process (fixed, no learnable params):

x₀ ──────► x₁ ──────► x₂ ──────► ... ──────► x_T

(data) q(x_t|x_{t-1}) = add Gaussian noise (pure noise)

β₁=1e-4 ──────────────────► β_T=0.02

Shortcut: x_t = √(ᾱ_t)·x₀ + √(1-ᾱ_t)·ε, ε ~ N(0,I)

Reverse Process (learned):

x_T ──────► x_{T-1} ──────► ... ──────► x₁ ──────► x₀

(noise) p_θ(x_{t-1}|x_t) = denoise one step (image)

┌──────────────────────────┐

│ ε_θ(x_t, t) predicts │

│ the noise ε that was │

│ added at step t │

└──────────────────────────┘

U-Net Denoiser Architecture:

┌─────────┐ ┌─────────┐ ┌─────────┐

│ Down │ │Bottlenck│ │ Up │

│ Blocks │────►│ + Self- │────►│ Blocks │

│ (ResNet │ │ Attn at │ │ (ResNet │

│ +BN+skip│ │ 16x16) │ │ +BN+skip│

│ connect)│ │ │ │ connect)│

└─────────┘ └─────────┘ └─────────┘

▲ │

└──────── Skip Connections ──────┘

Timestep t ──► Sinusoidal Embedding ──► Added to each ResNet block

Training (one step):

1. Sample x₀ ~ data, t ~ Uniform{1..T}, ε ~ N(0,I)

2. Compute x_t = √(ᾱ_t)·x₀ + √(1-ᾱ_t)·ε

3. Loss = ‖ε - ε_θ(x_t, t)‖² (simple MSE)

The forward (noising) process is a fixed Markov chain that gradually adds Gaussian noise to a data sample x_0 over T=1000 steps: q(x_t | x_{t-1}) = N(x_t; sqrt(1 - beta_t) * x_{t-1}, beta_t * I), where beta_t increases linearly from beta_1 = 1e-4 to beta_T = 0.02. A key property is that any intermediate x_t can be sampled directly from x_0 in closed form: x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * epsilon, where alpha_bar_t = product_{s=1}^{t} (1 - beta_s) and epsilon ~ N(0, I).

The reverse (denoising) process learns to undo each noise step: p_theta(x_{t-1} | x_t) = N(x_{t-1}; mu_theta(x_t, t), sigma_t^2 * I). The mean mu_theta is parameterized as a function of the predicted noise: mu_theta(x_t, t) = (1/sqrt(alpha_t)) * (x_t - (beta_t / sqrt(1 - alpha_bar_t)) * epsilon_theta(x_t, t)). The variance sigma_t^2 is fixed to beta_t (not learned).

The denoising network epsilon_theta is a U-Net with: downsampling blocks (ResNet blocks + self-attention at 16x16), a bottleneck, and upsampling blocks with skip connections. Timestep t is encoded via sinusoidal embeddings (same as transformer positional encodings) and added to each ResNet block. Group normalization replaces batch normalization. Self-attention is applied only at the 16x16 resolution level to keep computation manageable.

Training minimizes the simplified objective L_simple = E_{t, x_0, epsilon} [||epsilon - epsilon_theta(sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * epsilon, t)||^2], which uniformly weights all timesteps. Sampling generates images by starting from x_T ~ N(0, I) and iterating the reverse process for T steps.

Results

| Dataset | FID | IS | bits/dim |

|---|---|---|---|

| CIFAR-10 (32x32) | 3.17 | 9.46 | 3.75 |

| LSUN Bedroom (256x256) | 4.90 | - | - |

| LSUN Church (256x256) | 7.89 | - | - |

| LSUN Cat (256x256) | 19.75 | - | - |

- State-of-the-art unconditional generation: FID of 3.17 on CIFAR-10 (32x32), surpassing most existing GAN variants, and IS of 9.46, both without any adversarial training

- Training is completely stable: Unlike GANs, there is no mode collapse, no discriminator-generator balancing act, and loss curves decrease monotonically -- the paper reports no training failures

- Lossless compression connection: The variational lower bound yields a bits-per-dimension of ≤3.75 on CIFAR-10, competitive with autoregressive models, showing that diffusion models are also good density estimators. While log-likelihoods were not competitive with autoregressive models, this reflects inductive bias toward perceptually meaningful compression rather than exact pixel reconstruction -- the majority of bits encode imperceptible details while perceptually important features are captured efficiently

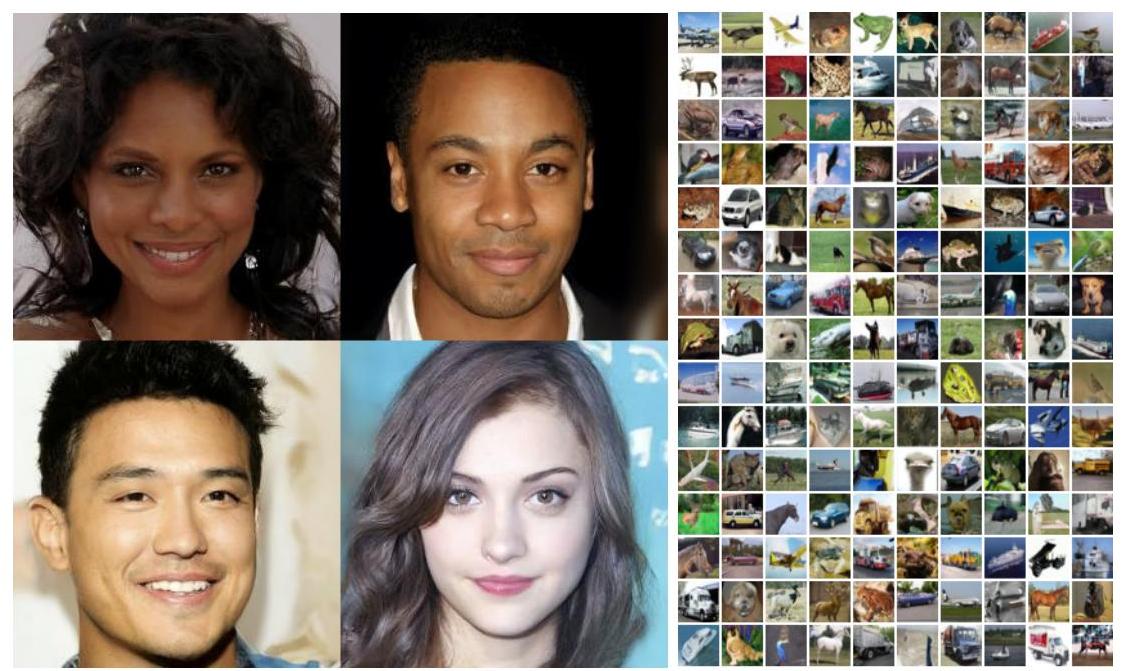

- High-quality 256x256 samples: On LSUN Bedrooms, Churches, and Cats, produces visually coherent samples competitive with ProgressiveGAN and StyleGAN

- Hierarchical progressive generation: Rate-distortion analysis reveals that early timesteps capture coarse image structure while later steps refine fine details, providing natural interpretability and synthesis control

- Critical parameterization choices: The epsilon-prediction parameterization was essential -- alternative parameterizations like directly predicting posterior means yielded significantly worse results. The simplified L_simple objective was crucial for sample quality despite being suboptimal for likelihood estimation

Limitations & Open Questions

- Sampling requires T=1000 sequential denoising steps, making generation orders of magnitude slower than GANs or VAEs; later work (DDIM, distillation) addresses this

- The linear noise schedule is suboptimal; subsequent work showed cosine schedules improve sample quality, especially for high-resolution images

- The paper evaluates only unconditional generation on relatively small images (32x32 CIFAR-10, 256x256 LSUN); class-conditional and text-conditional generation required further innovations (classifier guidance, classifier-free guidance)

Connections

- Machine Learning

- Diffusion Models Beat Gans On Image Synthesis -- Directly builds on DDPM, improving the U-Net architecture and introducing classifier guidance to surpass GANs on ImageNet for the first time

- Variational Lossy Autoencoder

- Attention Is All You Need

- Deep Residual Learning For Image Recognition